Why AI Projects Fail and How to Get Them Right

AI has never been more accessible. In just the past few years, organizations have gone from experimenting with machine...

By: Stephen Sklarew | Apr 6, 2026 5:26:39 PM

McKinsey estimates generative AI could automate 60–70% of knowledge worker activities, yet only 21% of organizations using it have fundamentally redesigned their workflows to capture that value. The reason is rarely the technology itself. The bottleneck, almost always, is the organization surrounding it. A 2026 Betterworks survey of over 2,000 employees makes this concrete: 59% of executives believe they communicate a clear AI vision to their workforce, yet only 8% of employees agree. The gap between what leadership thinks is happening and what the workforce is actually experiencing is not a communication problem; it's a structural one, and it sits squarely in HR's domain.

Think about what actually kills an ecosystem: it's rarely a single catastrophic event, it's the slow mismatch between a species' hardwired behaviors and a climate that has quietly shifted around it. Workforce architecture has the same vulnerability. Stable roles, predictable skill half-lives, and clearly drawn functional boundaries made complete sense in the environment they were designed for, and HR leaders built them well. The problem is that this stable environment doesn’t exist anymore, and talent processes optimized for that older climate widen the gap between organizational capability and market reality.

This article is about closing that gap through four specific systems:

- literacy development

- career progression

- hiring, and

- governance.

Adaptation should be something an organization does naturally and continuously, the way a healthy ecosystem absorbs change.

The organizational chart most companies still operate from was designed for a world where building software required years of specialized training, and where a marketing manager who wanted a new tool had to submit a ticket to IT and wait three months or more. That world is dissolving faster than most leadership teams have grasped.

In 2021, Gartner predicted that a majority of technology products and services would be built by people who are not technology professionals, because the tools would finally have caught up to the ambition that non-technical employees always had. The doctor who saw a better way to handle patient intake, the operations lead who knew exactly where the workflow was breaking: they always had the ideas, they just never had the means. Now they do.

What this means structurally is that the clean lines separating engineering from operations, or product from finance, are eroding. “Citizen developers” are widely believed to outnumber professional developers at large enterprises now, and that ratio keeps shifting as AI coding tools make the gap between technical and non-technical increasingly arbitrary. And yet, according to the same Betterworks 2026 survey, executives are 7x more likely than individual contributors to say their team goals regularly include expectations around using AI tools. This highlights a massive accountability gap: leadership believes they’ve codified AI into the work, but for the vast majority of the workforce, AI remains an 'off-the-books' activity rather than a formal performance expectation.

For HR, this creates an urgent design problem, because most job descriptions, performance frameworks, and career ladders were built to reward depth inside a lane, rather than the ability to build across lanes. Organizations that keep optimizing for specialization risk moving too slowly and are actively working against the employees most capable of creating value. This shift toward "radical generalism" is already being architected at the highest levels of tech leadership. Microsoft CEO Satya Nadella has begun dismantling traditional functional silos, recently merging design, program management, and front-end engineering into a single, unified role known as "Full-Stack Builders." By moving away from hyper-specialized "order-takers" toward broader, AI-empowered roles, Nadella argues that we are moving from being "sole performers to orchestra conductors," where the primary skill is no longer just deep technical execution, but the ability to manage a "fleet of agents" across domains.

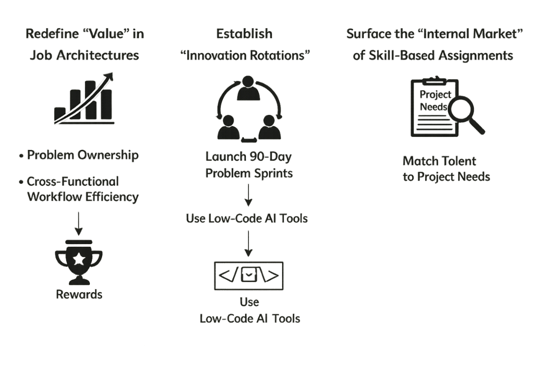

To move beyond silos, HR must dismantle the structural incentives that protect them. When you anchor promotions and bonuses strictly to individual department KPIs, you are explicitly discouraging the cross-functional experimentation required to build modern, AI-integrated workflows. Here’s how to transform job architectures for innovation and efficiency:

Redefine "Value" in Job Architectures: Stop measuring success solely by task completion within a job description, and shift to "Problem Ownership" and "Cross-Functional Workflow Efficiency" metrics. If a marketing manager identifies a manual data-processing bottleneck, they should be rewarded for automating it, even if that work falls outside their functional area.

Establish "Innovation Rotations": Most employees stay in their silos because they lack the permission to work outside of them. Launch 90-day "Problem Sprints" where employees from different functions work together to solve a specific friction point using low-code/no-code AI tools.

The "Internal Market" of Skill-Based Assignments: Move away from static role titles. Surface "Project Needs" rather than "Role Openings." This allows a finance analyst with prompt-engineering skills to contribute to an engineering-led automation project without needing to leave their home department.

When you treat roles as permeable membranes rather than rigid containers, you stop managing headcount and start managing a dynamic, self-optimizing portfolio of talent. The goal is to move from a rigid structure to a fluid network where problems are "solved" by the person closest to the friction.

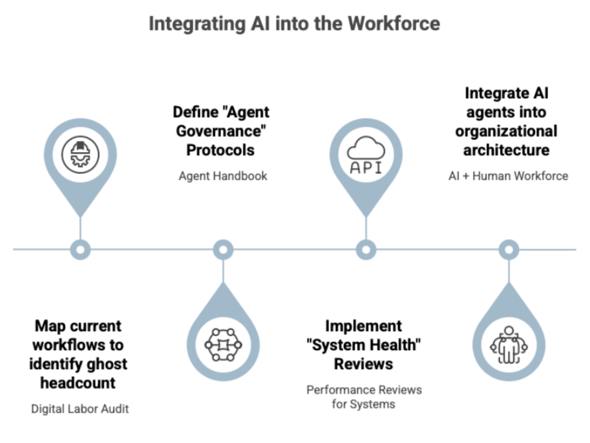

Here's something worth sitting with for a moment: the workforce you're managing today is not entirely human. AI agents are already in the building. They're processing documents, monitoring compliance workflows, generating internal analysis, and sitting inside operations that your org chart still shows as fully human.

The gap between what the org chart says and what's actually operating is exactly where governance breaks down, because none of the frameworks HR built for human workforces translate to systems that run autonomously, at scale, around the clock, without the judgment constraints that come with human accountability. When an AI agent makes a consequential error in a compliance workflow, who owns that? When an AI agent consistently handles work that used to define someone's performance metrics, what does a fair performance review actually measure? These are far from philosophical questions, they're operational ones, and they're landing on HR's desk whether HR asked for them or not.

The organizations that get this right are now mapping where humans and AI systems are genuinely collaborating, defining accountability before something breaks, and building workforce infrastructure that reflects the organization today rather than the org chart drawn up three years ago. The urgency is real; according to the Betterworks 2026 survey, only 26% of HR leaders say they've been meaningfully involved in managing change and employee adoption of AI, which means for most organizations, the hybrid workforce is already here and HR is still largely watching from the sidelines.

To bridge this gap, HR must begin formalizing AI as a "co-collaborator." If an AI agent consistently handles 20 hours of compliance triaging, that capacity should be codified in your workforce planning exactly as you would a part-time employee. Here are some ways to move in this direction:

Do a Digital Labor Audit: Map your current workflows to identify "ghost headcount." Every time you find a process being executed by an AI agent, assign that agent an "Agent ID" in your HRIS. This forces visibility. It also forces the next question: who is the human co-collaborator, the "Accountable Lead," for that agent’s output? Naming that person creates a clear line of human accountability for autonomous actions.

Define "Agent Governance" Protocols: Just as you have an employee handbook, you need an "Agent Handbook." This should define the agent's scope, its access permissions, and two critical continuity protocols. The first is a "Kill Switch": the automated trigger for an immediate human takeover when the agent fails or behaves unexpectedly. The second is a manual fallback plan that keeps the workflow running even when the system is entirely unavailable. This second piece is not optional in regulated or high-stakes environments. In healthcare, for instance, a patient intake or compliance workflow that depends entirely on an AI agent with no human-operable fallback isn't a modern system, it's a single point of failure dressed up as one.

Conduct Performance Reviews for Systems: Stop evaluating only people. Implement "System Health Reviews." If an AI agent has a 15% error rate in data extraction, that is a performance issue that requires a "coaching" intervention.

Integrate AI Agents: Integrate AI agents into your organizational architecture to start managing a high-performance AI + Human workforce. When you treat systems as co-collaborators, you can scale output without linearly scaling headcount.
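To make the audit, the kill switch, and the system health review more concrete, here is a minimal Python sketch. Everything in it is a hypothetical illustration rather than a feature of any particular HRIS: the `AgentRecord` fields, the `health_review` function, the 40-hour full-time week, and the 10% error threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One row in a hypothetical digital-labor registry."""
    agent_id: str            # the "Agent ID" assigned during the audit
    workflow: str            # the process the agent executes
    accountable_lead: str    # the human who owns the agent's output
    weekly_hours: float      # capacity the agent absorbs each week
    max_error_rate: float    # assumed kill-switch threshold

    def fte_equivalent(self, full_time_hours: float = 40.0) -> float:
        """Express the agent's capacity as a fraction of a full-time role."""
        return self.weekly_hours / full_time_hours


def health_review(agent: AgentRecord, errors: int, total: int) -> dict:
    """Compare the observed error rate to the agent's threshold."""
    if total <= 0:
        raise ValueError("total must be a positive number of reviewed items")
    error_rate = errors / total
    # Above the threshold, the kill switch hands the workflow back to a human.
    action = "human_takeover" if error_rate > agent.max_error_rate else "continue"
    return {
        "agent_id": agent.agent_id,
        "error_rate": error_rate,
        "action": action,
        "escalate_to": agent.accountable_lead,
    }


# Example: an agent handling 20 hours of compliance triaging per week,
# reviewed on a sample of 100 items with 15 extraction errors.
triage_agent = AgentRecord(
    agent_id="AGENT-001",
    workflow="compliance triage",
    accountable_lead="compliance manager",
    weekly_hours=20,
    max_error_rate=0.10,
)
review = health_review(triage_agent, errors=15, total=100)
```

In this sketch the agent counts as half a full-time role in workforce planning, and a 15% error rate against a 10% threshold routes the work back to the Accountable Lead. A real protocol would also need the manual fallback procedure, which no code can supply.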

Underwriting is another area where AI is reshaping how work gets done.

The process is inherently document-heavy, requiring teams to review applications, financial statements, and supporting records. This creates friction: manual effort, data entry errors, and slow turnaround times.

Large language models address this directly.

They can extract, organize, and summarize information across documents, reducing the need for manual processing. This improves both speed and consistency, especially in high-volume environments.

On the decision side, machine learning models help assess risk by learning from historical outcomes, such as whether loans were repaid.

But this introduces its own complexity. Most applicants are creditworthy, which means models must be carefully designed to handle imbalanced data and avoid biased predictions.
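A small Python sketch shows why imbalance matters. The 95/5 split between repaid and defaulted loans is an invented figure for illustration, not data from any lender, and the helper names are hypothetical. The weighting formula, total samples divided by (number of classes times class count), is one common "balanced" heuristic and not the only way to handle imbalance.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def recall(y_true, y_pred, positive):
    """Fraction of true `positive` cases the predictions actually caught."""
    actual = [p for t, p in zip(y_true, y_pred) if t == positive]
    if not actual:
        return 0.0
    return sum(p == positive for p in actual) / len(actual)


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * c) for cls, c in counts.items()}


# Assumed portfolio: 95 repaid loans and 5 defaults.
labels = ["repaid"] * 95 + ["default"] * 5

# A "model" that approves everyone looks accurate but never flags a default.
always_repaid = ["repaid"] * len(labels)
naive_accuracy = accuracy(labels, always_repaid)
default_recall = recall(labels, always_repaid, positive="default")

# Rare classes get larger weights so training errors on them cost more.
weights = balanced_class_weights(labels)
```

Here the approve-everyone baseline scores 95% accuracy while catching none of the defaults, which is why accuracy alone is a poor measure for this kind of model. Reweighting addresses the class imbalance only; checking for biased predictions across applicant groups is a separate step.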

Importantly, AI does not replace the underwriter.

Instead, it supports decision-making by highlighting risk factors, surfacing relevant information, and improving efficiency. Final judgment remains with humans, particularly in high-stakes or regulated contexts.

Here is something that gets lost in almost every AI conversation: AI is only as good as the people behind it. People are not just the users of these systems, they are the enablers, and in most cases, they are the source of the data that makes AI run at all. Strip away the human layer and you don't have a more efficient organization, you have an expensive system producing outputs no one knows how to act on. That is why HR sits at the heart of AI transformation, not at its edge, and why the work of building literacy, redesigning career paths, and governing human-AI collaboration isn't merely a support function, it is the transformation itself.

By shifting from measuring siloed performance to measuring cross-department collaboration, and by treating AI literacy as a requirement for all employees, you cultivate a workforce that naturally absorbs change rather than resisting it.

However, to truly unlock your organization’s potential, you need to redefine advancement, shifting from rewarding process adherence to rewarding system evolution.

The foundation has been laid: role boundaries are becoming permeable, the workforce now includes systems as well as people, and the organizations moving fastest are the ones that stopped waiting for a perfect plan and started treating adaptability as infrastructure. But foundation alone doesn't compound. In the next article, we dig into the rebuilding work: what AI literacy actually looks like when it's treated as organizational infrastructure rather than a training program, how career progression needs to be redesigned to reward the employees who improve systems rather than just operate within them, and what a 90-day activation plan looks like for an HR team that wants to move from diagnosis to measurable capability shift, without waiting for a transformation budget to get started.

Looking to apply AI in HR but not sure where to start?

Reach out here to learn more or discuss your next project.
